Here’s an interesting question that just came in from a local scrum master. It’s about estimating tasks and management’s role in choosing the practices that a scrum team uses.

Here’s an interesting question that just came in from a local scrum master. It’s about estimating tasks and management’s role in choosing the practices that a scrum team uses.

Question

Chris,

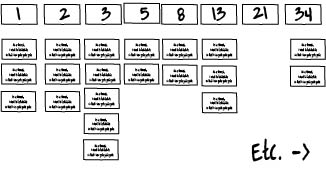

The team I am working with wants to do an experiment where they will stop estimating tasks in hours. Their sprint burn down will then be tasks vs. days instead of hours vs. days. The team believes that they will be successful with this and they are also thinking of creating an initial working agreement for this experiment e.g. any task that will be added will not be longer than a day of effort.

I am supporting this but somehow I have failed in explaining and convincing management. They want me to explain the benefits and the purpose of this experiment. They point to scrum books that say tasks should always be estimated in hours and a burn down chart can only be shown using hours. How do I convince management to allow the team to proceed with this experiment?

Read the full article…

How long should our sprints be? This is a question I am frequently asked by new scrum masters and scrum teams. Here is how it showed up in my in-box recently.

How long should our sprints be? This is a question I am frequently asked by new scrum masters and scrum teams. Here is how it showed up in my in-box recently. I was inspired to create a retrospective game for agile teams, based on the game Dixit.

I was inspired to create a retrospective game for agile teams, based on the game Dixit.  A team without a woman is like a bicycle with… some fish? So it would seem, according to Grace Nasri, who writes

A team without a woman is like a bicycle with… some fish? So it would seem, according to Grace Nasri, who writes Our friend and colleague

Our friend and colleague